A PoC for Contract Testing in the Frontend



Let me tell you the story of an incident that might or might not have happened in a software development team called “ProductEverywhere” near you.

“ProductEverywhere” provides a card component displaying the information of a product in the platform, which other teams can use in their microfrontends.

Great Product

Some description

EUR 200,00

The card can be used by the teams both as a web component and as a React component, like this:

    <product-card
      id="12432"
      @selected={({detail}) => console.log(detail)}>
    </product-card>

Web Component

    <ProductCard
      id="12432"
      selected={(product) => console.log(product)}>
    </ProductCard>

React Component

But then, the component needs to change. In the new version, they require a campaign id as well. So their component changes to resemble something like this:

    <ProductCard
      articleId="12432"
      campaignId="45333a"
      selected={(product) => console.log(product)}>
    </ProductCard>

In a monolithic application, understanding where your component is used is somewhat easy. Less so in a distributed microfrontend world. You can create a new version, and thus the teams can update in their own time. But this doesn’t help because campaigns might impact the price, and you don’t want different prices depending on which version of the component is in use.

How will this team figure out who’s using their component, and how?

Another team in the company, the “Suggestors”, has a similar issue. They want to use the ProductCard but want to replace the description with a tagline that explains why you might want to click that one.

They will send the requirement, get an additional property, and test that it works. Then they will have to hope that three years from now, the “ProductEverywhere” team remembers this particular requirement when they rebuild the component from the ground up because the technology has changed.

How can we make sure that this feature will not get lost in the future?

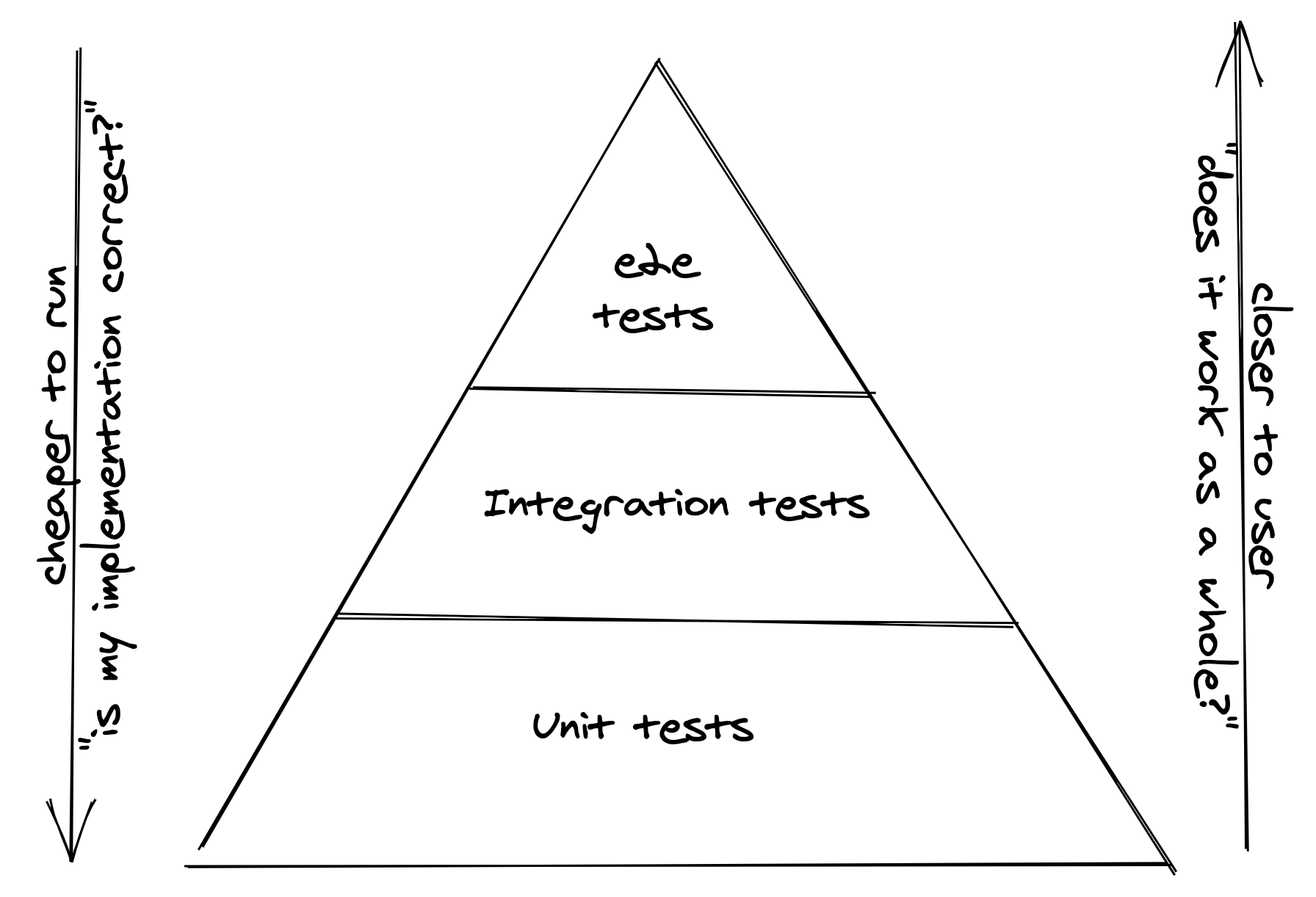

The answer is tests. But which of our tests are helping us here? Let’s take a look at a classic testing pyramid.

A typical test pyramid. Tests at the bottom are cheap to execute, with a focus on verifying the implementation is correct, whereas tests at the top are more expensive (time & effort) and prove that the system works

Unit tests are written by the team that builds the component. While “ProductEverywhere” might have written those for the feature for “Suggestors”, they might still delete the test in the future, not remembering why it was there in the first place. They might add a comment to the commit or code that refers back to the original use case - but maybe the team “Suggestors” doesn’t exist anymore three years from now, and others have taken over the functionality. How do you find out whether you can delete them?

You would need either a “don’t delete anything” policy, which is a hidden concession that you have lost control over your source code, or you go with “delete and see who complains”, which is not an excellent way to make friends.

For the team “Suggestors”, the problem is different. They can write a unit test for their feature in the ProductCard component, verifying its behaviour. If the test breaks, however, there is no way for them to fix it. It’s an integration test, with the same disadvantage you always have with them: Who’s to blame when the test breaks, and what can the team with the failing test do to fix it?

On the upper end of the test pyramid, we have end-to-end tests. Those tests are expensive to run, often brittle, and in a microfrontend environment, ownership of tests covering use-cases that span multiple teams can be tricky.

A Detour to the backend

When I look at existing problems, I often try to understand how others solve them in other environments. For this, I looked into backend development and how it is solved there.

In the backend, the closest analogy to components that are shared is APIs. Just like components, they have a specified input (endpoints with methods and data that consumers can send), some behaviour you expect when calling the endpoint, and a result with a specific format. APIs will change over time, and both producer and consumer of the API will have to make sure they have the tests in place to make sure the system doesn’t break.

There are different ways to approach this issue. With recordings, you store responses for actual calls locally at the consumer and execute tests against those recorded calls. This approach is swift and stable, as all tests are running against local copies of actual calls. However, recordings don’t provide that much value, as you only verify that the test passed at the point when you created the recording. The API might have changed in the real world, and you are no longer able to call it, but the tests are still green.
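
To make the weakness of recordings concrete, here is a minimal sketch. The response shape, the `formatPrice` function and the renamed field are all made up for this illustration and do not come from a real API.

```typescript
// Hypothetical recording, captured once from the real product API.
interface ProductResponse {
  id: string;
  price: { currency: string; amount: number };
}

const recordedResponse: ProductResponse = {
  id: "1234",
  price: { currency: "EUR", amount: 200 },
};

// Consumer code under test: formats the price for display.
function formatPrice(product: ProductResponse): string {
  return `${product.price.currency} ${product.price.amount.toFixed(2)}`;
}

// The consumer's test only ever sees the local copy.
function testAgainstRecording(): boolean {
  return formatPrice(recordedResponse) === "EUR 200.00";
}

// Assume the real API has since renamed `amount` to `value`.
// The recording knows nothing about it, so the test stays green.
const liveResponseToday = { id: "1234", price: { currency: "EUR", value: 200 } };

const recordingStillPasses = testAgainstRecording();
const liveStillMatches = "amount" in liveResponseToday.price;
```

The test passes although the live response no longer has the field the consumer reads, which is exactly the gap described above.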

Integration tests call the other services. They verify that the request works now, but often they are slower and more brittle, as there are, for instance, network calls involved. If they break, understanding why they fail can take many resources.

The solution that gained some traction in the API-driven backend world was contract tests.

In a nutshell, contract tests combine the benefits of recordings, unit tests and integration tests. I like to introduce them as recorded tests where the recording is shared with the API provider. The provider commits to running a test against their API, as a unit test, that compares the result with the recording and ensures that the recording is still valid.

    +-------------+     +-------------+
    |             |     |             |
    |  Consumer   |     |  Provider   |
    |             |     |             |
    +-----+-------+     +------+------+
          |                    |
          +-----------+        |
          | create    |        |
          | test      |        |
          +-----------> mocked |
          |        component   |
          |                    |
          |   send contract    |
          +-------------------->
          |                    |
          |                    +----------+
          |                    | run unit |
          |                    | test to  |
          |                    | verify   |
          |                    | mock     |
          |                    <----------+
          |                    |
          |                    |
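
The flow in the diagram can be sketched in a few lines. This is only an illustration of the idea, not how any particular contract testing tool works: `Expectation`, `mockFromContract` and `verifyAgainstProvider` are names made up for this example.

```typescript
// One recorded interaction: "when I send this input, I expect this output".
interface Expectation {
  input: string;
  expectedOutput: string;
}

type ProductName = (id: string) => string | undefined;

// Consumer side: the contract doubles as a mock for the consumer's own tests.
function mockFromContract(contract: Expectation[]): ProductName {
  return (id) => {
    const match = contract.filter((e) => e.input === id)[0];
    return match ? match.expectedOutput : undefined;
  };
}

// Provider side: replay every expectation against the real implementation
// and collect the ones that no longer hold.
function verifyAgainstProvider(contract: Expectation[], real: ProductName): Expectation[] {
  return contract.filter((e) => real(e.input) !== e.expectedOutput);
}

const nameContract: Expectation[] = [{ input: "1234", expectedOutput: "Great Product" }];

// The consumer tests against the mock ...
const mocked = mockFromContract(nameContract);
const consumerTestPasses = mocked("1234") === "Great Product";

// ... and the provider checks that the mock still tells the truth.
const providerToday: ProductName = (id) => (id === "1234" ? "Great Product" : undefined);
const providerAfterRename: ProductName = (id) => (id === "1234" ? "Greatest Product" : undefined);

const failuresToday = verifyAgainstProvider(nameContract, providerToday);
const failuresAfterRename = verifyAgainstProvider(nameContract, providerAfterRename);
```

The important difference to a plain recording is the second half: the same expectations are replayed where the real implementation lives, so a change on the provider side shows up as a failing test there.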

The advantage over test recordings running at the consumer is that the provider runs the tests and verifies that the recording is valid. Contract testing setups usually add one more piece that gives the consumer a benefit similar to integration tests: they can see whether the test is currently passing for the provider. This piece is called a broker.

    +-------------+   +-------------+   +--------------+
    |             |   |             |   |              |
    |  Consumer   |   |   Broker    |   |   Provider   |
    |             |   |             |   |              |
    +-----+-------+   +------+------+   +-------+------+
          |                  |                  |
          +-----------+      |                  |
          |           |      |                  |
          | create    |      |                  |
          | test      |      |                  |
          |           |      |                  |
          +-----------> mock |                  |
          |       component  |                  |
          |                  |                  |
          | publish contract |                  |
          +------------------>                  |
          |                  |                  |
          |                  | receive contract |
          |                  +------------------>
          |                  |                  |
          |                  | test fails       +------------+
          |                  <------------------+            |
          |                  | test fails       | implement  |
          |                  <------------------+ contract   |
          | get test result  |                  |            |
          +------------------>                  <------------+
          |                  | test passes      |
          |                  <------------------+
          | get test result  |                  |
          +------------------>                  |
          |                  |                  |
          |                  |                  |

This flow allows the consumer to get the result of the test, but asynchronously. If they need new features (for instance, the “Suggestors” need to replace the description), they can create their expectations and know when they can release the new feature.
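
As a rough mental model, a broker does not need to be more than a store for contracts and their latest verification result. The following in-memory sketch is an assumption for illustration only; the class and method names are invented and a real broker would be a service with persistent storage.

```typescript
type Verification = "pending" | "passed" | "failed";

interface Contract {
  consumer: string;
  provider: string;
  element: string;
  test: string; // the shared test, for example as source code
}

// Hypothetical in-memory broker.
class InMemoryBroker {
  private contracts = new Map<string, Contract>();
  private results = new Map<string, Verification>();

  private key(c: Pick<Contract, "consumer" | "provider" | "element">): string {
    return `${c.consumer}/${c.provider}/${c.element}`;
  }

  // Consumer side: publish a new contract or update an existing one.
  publish(contract: Contract): void {
    this.contracts.set(this.key(contract), contract);
    this.results.set(this.key(contract), "pending");
  }

  // Provider side: fetch all contracts that target this provider.
  contractsFor(provider: string): Contract[] {
    return Array.from(this.contracts.values()).filter((c) => c.provider === provider);
  }

  // Provider side: report the outcome of running the contract's test.
  report(contract: Contract, passed: boolean): void {
    this.results.set(this.key(contract), passed ? "passed" : "failed");
  }

  // Consumer side: ask for the current state whenever convenient.
  verificationOf(contract: Contract): Verification | undefined {
    return this.results.get(this.key(contract));
  }
}

const broker = new InMemoryBroker();
const teapotContract: Contract = {
  consumer: "Suggestors",
  provider: "ProductEverywhere",
  element: "ProductCard",
  test: "/* generated test source */",
};

broker.publish(teapotContract);
const afterPublish = broker.verificationOf(teapotContract);

broker.report(teapotContract, false); // provider has not implemented it yet
const afterFirstRun = broker.verificationOf(teapotContract);

broker.report(teapotContract, true); // provider shipped the feature
const afterImplementation = broker.verificationOf(teapotContract);
```

The consumer never talks to the provider directly here. It only asks the broker, which is what makes the flow asynchronous.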

So much for the theory. Let’s see how we can apply that to the front end.

Contract tests for components

Contract tests in the API usually consist of the following parts:

- A state is defined that the system should have (state)

- The call to the API is described (input)

- The response is asserted (output)
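
For an API, these three parts fit into a plain data structure. The shape below is a hypothetical one for illustration; the field names are not taken from any specific contract testing tool.

```typescript
// Hypothetical shape of an API contract.
interface ApiContract {
  // Precondition the provider has to set up before the call.
  state: string;
  // The call the consumer will make.
  input: { method: "GET" | "POST"; path: string; body?: unknown };
  // The response the consumer relies on.
  output: { status: number; body: unknown };
}

const productContract: ApiContract = {
  state: "a product with id 1234 exists",
  input: { method: "GET", path: "/products/1234" },
  output: { status: 200, body: { id: "1234", name: "Great Product" } },
};

// Because the contract is plain data, it survives a trip through JSON
// and can be handed to another team without losing anything.
const serialised = JSON.stringify(productContract);
const roundTripped: ApiContract = JSON.parse(serialised);
```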

Looking at the frontend, the first thing that increases the complexity is a simple fact:

Things we do in the frontend often have two consequences: the executed business logic and the feedback as part of the usability experience

This is a significant difference from the backend, where we can focus on business logic. As an example, let’s look at the campaign id from earlier. When the consumer sets the campaign id, it is not enough to apply the discount to the total price - it is just as important to show the discount so that the user can easily understand the value.

The other thing is behaviour. If we click a button in an element, we might expect that an event is triggered. But there might be additional constraints like text boxes above the buttons being validated or cleared.

As for APIs, the definition is pretty straightforward; we can define the test in JSON. In the frontend, given the complexity we have, this is much more difficult. Luckily, efficiently testing this combination is a solved problem: the testing-library lets you write tests that resemble the way your software is used.

For the approach I have chosen in this Proof of Concept, we will use the definition to create a test that can be executed and shared.

Consumer: Creating the contract definition

We are back at the team “Suggestors”. As they want to create a contract for the other team now, they closely consider what they need from the other team.

First, they want to change the description and provide some text from the outside.

Secondly, they want to track if and which product was opened from this component.

We can specify two requirements: we want to pass the label text to the component and receive the product id from the event the element triggers.

In the definition, this is expressed as the given block, and in our case, it looks like this:

    const given = {
      props: {
        label: "You see this because you love teapots!"
      },
      events: {
        "selected": { id: "1234" }
      }
    }

Thus we specified the shape of the component we expect. When talking to “ProductEverywhere”, they will then be able to tell us that we can’t use the component like this - we have to pass the id of the article from the outside, and thus change the definition to:

      const given = {
        props: {
    +     id: "1234",
          label: "You see this because you love teapots!"
        },
        events: {
          "selected": { id: "1234" }
        }
      }

They will also tell us that the selected event doesn’t return the id only, but the complete product. For “Suggestors”, however, this is not relevant; they only care about the product id.

The definition so far only contains information about how the interface to the component looks, not how it behaves when we use it that way. This is our next block, defining the when.

To define it, we will have to think about what we expect the user to see and do with the component. The when block allows us to define multiple nested steps that describe the user interaction, the way we know it from the testing-library, via queries and user-events.

    const when = [
      // What is the user seeing?
      {
        type: "query",
        action: "getByText",
        args: [
          /You see this because you love teapots/gi
        ]
      },
      // What do we expect the user to do?
      {
        type: "userAction",
        action: "click",
        args: [
          {
            type: "query",
            action: "getByRole",
            args: [
              "button",
              { name: /open/i }
            ]
          }
        ],
        // the event we expect the action to trigger
        triggers: "selected"
      },
    ]

With this, we have defined the user journey. The last part is asserting that the component’s output after the user journey is as we expected. This we can do with a then block that allows us to specify asserting blocks for the events we have defined or network calls we anticipate:

    const then = [
      {
        type: "eventListener",
        // matches the name of the event we defined in given
        name: "selected",
        assert: (selected: jest.Mock) => {
          expect(selected).toBeCalledTimes(1);
          expect(selected.mock.calls[0][0].id).toEqual("1234");
        }
      }
    ]

The event listener attaches a jest mock on the event triggered and can verify the event is exposed as we need. Since we are only interested in the id, we compare only this one element.
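
The regular expression in the when block and the assert function in the then block have a practical consequence: unlike an API contract, such a definition cannot simply be shipped as JSON. The sketch below shows what standard `JSON.stringify` does with them, and why turning the definition into test source code is one way around it. The simplified `step` and `assertion` objects are stand-ins for this example, not the library's actual types.

```typescript
// Simplified stand-ins for one `when` step and one `then` assertion.
const step = {
  type: "query",
  action: "getByText",
  args: [/You see this because you love teapots/gi],
};

const assertion = {
  type: "eventListener",
  name: "selected",
  assert: (calls: unknown[]) => calls.length === 1,
};

// JSON has no notion of regular expressions or functions:
// the RegExp becomes an empty object and the function is dropped.
const stepAsJson = JSON.stringify(step);
const assertionAsJson = JSON.stringify(assertion);

// Rendering both as source text keeps the information intact.
const stepAsSource = `screen.${step.action}(${step.args.map((arg) => String(arg)).join(", ")})`;
const assertAsSource = assertion.assert.toString();
```

After serialising to JSON, the text to look for and the assertion are both gone, while the source rendering still contains them.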

Finally, we set up the definition with the consumer, provider and the element under test, and add our blocks to it:

    const definition = {
      consumer: "Suggestors",
      provider: "ProductEverywhere",
      element: "ProductCard",
      given,
      when,
      then
    }

This definition we can now use to create our contract:

await testForReact(document.createElement("container"), definition, { url: urlForBroker })

With this line, a few things happen.

- From the definition, a test is created and executed to ensure it is valid.

- The test is sent to the broker, which checks if the contract already exists.

- If the contract exists, the definition is updated for the latest version; otherwise, a new contract is created.

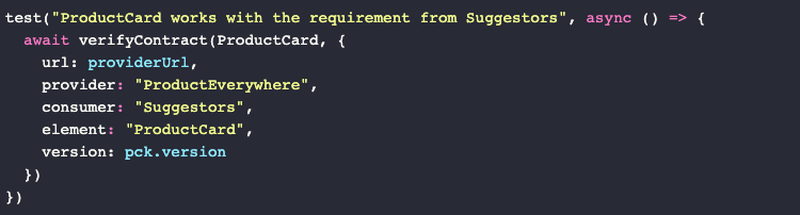

Provider: Implementing the requirement

As “ProductEverywhere” got the notification that there is a new contract available for their ProductCard, they include another test in their project.

    test("ProductCard works with the requirement from Suggestors", async () => {
      await verifyContract(ProductCard, {
        url: providerUrl,
        provider: "ProductEverywhere",
        consumer: "Suggestors",
        element: "ProductCard",
        version: pck.version
      })
    })

When they run their tests now, this specific test will do the following things:

- Download the contract from the provided URL

- Execute the test against ProductCard.

- Publish the result of the test for the package’s current version
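
The three steps can be sketched as follows. This is not the implementation of `verifyContract`; `verifySketch`, its parameters and the result shape are assumptions made for this example, with the transport to the broker injected as plain functions.

```typescript
interface DownloadedContract {
  consumer: string;
  element: string;
  // The executable test from the broker; throws when an expectation fails.
  run: (component: unknown) => void;
}

interface VerificationResult {
  consumer: string;
  element: string;
  version: string;
  passed: boolean;
  error?: string;
}

// Hypothetical stand-in for the provider-side verification.
function verifySketch(
  component: unknown,
  version: string,
  download: () => DownloadedContract,
  publish: (result: VerificationResult) => void
): VerificationResult {
  const contract = download(); // 1. download the contract
  const base = { consumer: contract.consumer, element: contract.element, version };
  let result: VerificationResult;
  try {
    contract.run(component); // 2. execute it against the component
    result = { ...base, passed: true };
  } catch (e) {
    result = { ...base, passed: false, error: e instanceof Error ? e.message : String(e) };
  }
  publish(result); // 3. publish the outcome, pass or fail
  return result;
}

// Usage with stand-ins: a component without label support fails the contract.
const published: VerificationResult[] = [];
const cardWithoutLabel = { supportsLabel: false };

const outcome = verifySketch(
  cardWithoutLabel,
  "1.0.0",
  () => ({
    consumer: "Suggestors",
    element: "ProductCard",
    run: (component) => {
      if (!(component as { supportsLabel: boolean }).supportsLabel) {
        throw new Error("Unable to find an element with the text");
      }
    },
  }),
  (result) => {
    published.push(result);
  }
);
```

The point worth noting is that the result is published in both cases. A failing run is information the consumer wants to see just as much as a passing one.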

In this case, the test will fail and upload the result with the current version to the broker. The “Suggestors” will be able to see that the required functionality is currently not supported, and “ProductEverywhere” can create a story to implement the test.

The failing test will return a result that looks like this:

    TestingLibraryElementError: Unable to find an element with the text:
    /You see this because you love teapots/gi.

This error message alone does not help to understand what the other team wants, so there is another feature that allows the provider to understand the requirement that the other teams have. In the contract broker, they can take a look at the actual test that is going to be executed against their component, which roughly looks like this:

    const React = require("react");
    const { screen, render } = require("@testing-library/react");
    const { default: userAction } = require("@testing-library/user-event");

    module.exports = {
      contract: { consumer: "Suggestors", provider: "ProductEverywhere", element: "ProductCard" },
      expectedContract: async (container) => {
        // given: props and a mock for the "selected" event
        const selected = jest.fn();
        render(React.createElement(container, { id: "1234", label: "You see this because you love teapots!", selected }))
        // when: the user sees the label and clicks the button
        const block_0_0 = screen.getByText(/You see this because you love teapots/gi);
        const block_1_0 = screen.getByRole("button", { name: /open/i });
        const block_1 = userAction.click(block_1_0);
        // then: the assert function from the definition
        (function (selected) {
          expect(selected).toBeCalledTimes(1);
          expect(selected.mock.calls[0][0].id).toEqual("1234");
        })(selected)
      }
    }

They can see that the other team seems to expect a property label that contains the text they passed as a label. After adding the property and adding the element to the page, the test is green, and the “Suggestors” know the feature is there.
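
To give an idea of how a when block can end up as test code like the one above, here is a small generator sketch. It is not the generator from the demo repository: the `Step` type, the helper names and the variable naming scheme (`block_0`, `block_1_0`) are assumptions for this example.

```typescript
interface Step {
  type: "query" | "userAction";
  action: string;
  args: Arg[];
  triggers?: string;
}
type Arg = string | RegExp | { [key: string]: unknown } | Step;

// A nested step is an object that carries both a type and an action.
function isStep(arg: Arg): arg is Step {
  return typeof arg === "object" && !(arg instanceof RegExp) && "type" in arg && "action" in arg;
}

// Render a plain argument as source text, keeping regular expressions intact.
function renderValue(value: unknown): string {
  if (value instanceof RegExp) return String(value);
  if (typeof value === "string") return JSON.stringify(value);
  if (typeof value === "object" && value !== null) {
    const record = value as { [key: string]: unknown };
    const entries = Object.keys(record).map((key) => `${key}: ${renderValue(record[key])}`);
    return `{ ${entries.join(", ")} }`;
  }
  return String(value);
}

// Emit one line per step; nested steps are emitted first and referenced by name.
function generateLines(steps: Step[]): string[] {
  const lines: string[] = [];
  const emit = (step: Step, name: string): string => {
    const args = step.args.map((arg, i) => (isStep(arg) ? emit(arg, `${name}_${i}`) : renderValue(arg)));
    const target = step.type === "query" ? "screen" : "userAction";
    lines.push(`const ${name} = ${target}.${step.action}(${args.join(", ")});`);
    return name;
  };
  steps.forEach((step, i) => emit(step, `block_${i}`));
  return lines;
}

const whenSteps: Step[] = [
  { type: "query", action: "getByText", args: [/You see this because you love teapots/gi] },
  {
    type: "userAction",
    action: "click",
    args: [{ type: "query", action: "getByRole", args: ["button", { name: /open/i }] }],
    triggers: "selected",
  },
];

const generated = generateLines(whenSteps);
```

For the when block from this article, `generated` holds three lines: the `getByText` query, the nested `getByRole` query, and the click that uses the result of that query.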

Tradeoffs

As you may have guessed, the specification leaves room for a bug (or feature) that the team might ship to production. The definition does not fulfil the other team’s original requirement: to replace the description with the label.

Great Product

Some description

You see this because you love teapots!

EUR 200,00

The test can be only as good as the specification, and the more complicated the component, the more complex the definition has to be. And not all requirements can be easily written in text.
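
The gap can be made visible without rendering anything. In the sketch below, a card is reduced to the list of texts it shows; the two check functions are stand-ins for what the contract asserts today and what it would have to assert to capture the original requirement. In testing-library terms, the stricter check would correspond to a `queryByText` for the description that returns `null`. Whether the definition format of this Proof of Concept can express that is not something I cover here.

```typescript
// Visible text of two possible implementations, top to bottom.
const labelAdded = [
  "Great Product",
  "Some description",
  "You see this because you love teapots!",
  "EUR 200,00",
];
const labelReplacesDescription = [
  "Great Product",
  "You see this because you love teapots!",
  "EUR 200,00",
];

// What the contract in this article checks: the label is visible.
const showsLabel = (card: string[]): boolean =>
  card.some((text) => /You see this because you love teapots/i.test(text));

// What the original requirement also asked for: the description is gone.
const hidesDescription = (card: string[]): boolean => card.indexOf("Some description") === -1;

const currentContract = (card: string[]): boolean => showsLabel(card);
const stricterContract = (card: string[]): boolean => showsLabel(card) && hidesDescription(card);

const verdicts = {
  currentOnLabelAdded: currentContract(labelAdded),
  stricterOnLabelAdded: stricterContract(labelAdded),
  stricterOnReplacement: stricterContract(labelReplacesDescription),
};
```

The current contract accepts the implementation that merely adds the label, while the stricter one only accepts the implementation that replaces the description.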

The more complex the components get, the harder it will be to get the definition correct. And the longer the tests are, the trickier it will become for the teams to understand what the other team needs. This can be reduced by social interaction, but it won’t make it easier for developers ten years from now to comprehend the original intent.

And there are a few cases that you cannot test at all. It is also important to note that this will only test behaviour, not how something is displayed.

Overall, I’m not sure how well this will perform in different real projects and would love to hear feedback.

Bonus use-case: Changing the component as the provider

“ProductEverywhere” still has one change ahead of them: Adding the campaign id. Therefore the given block of all consumers needs to change for the tests to work.

      const given = {
        props: {
    -     id: "1234",
    +     productId: "1234",
    +     campaignId: "abcd",
        },
      }

When they apply the change in their component and update the version in their package, the tests of all contracts will fail. Therefore, all other teams will see a breaking change ahead but continue to use version 1.0.0 as the contract is still valid for this version.

Once the provider has released a new version, they can inform the consumers about the changes and mark the old version for deprecation. They can then track precisely when the updated contracts of their consumers come in, and once all tests pass, they can stop supporting the old version.

    +-------------+   +-------------+   +--------------+
    |             |   |             |   |              |
    |  Consumer   |   |   Broker    |   |   Provider   |
    |             |   |             |   |              |
    +-----+-------+   +------+------+   +-------+------+
          |                  |                  |
          |                  | test passes for  |
          |                  <------------------+
          |                  | version 1.0.0    |
          |                  |                  |
          |                  | test 1.0.1 fails +------------+
          |                  <------------------+            |
          |                  | test 1.0.1 fails | implement  |
          |                  <------------------+ component  |
          | verify test for  |                  | v1.0.1     |
          +------------------>                  <------------+
          | 1.0.0 pass       | test 1.0.1 fails |
          |                  <------------------+
          |    go talk to the other team        |
          <------------------+------------------+
          |                  |                  |
          +-----------+      |                  |
          |           |      |                  |
          | create    |      |                  |
          | test      |      |                  |
          |           |      |                  |
          +-----------> mock |                  |
          |       component  |                  |
          |                  |                  |
          | update contract  |                  |
          +------------------>                  |
          |                  | test passes      |
          |                  <------------------+
          |                  |                  |
          |                  |                  |

This will not solve any concurrency issues, so to orchestrate the release, “ProductEverywhere” will have to add a feature toggle to the component to switch the campaign on once all contracts are green.

Unlike in the integration they had without the contracts, they have a single point in their contract broker where they can see whether all consumers of their component have updated their dependencies.
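
What such a single point could answer is sketched below. The result shape, the function names and the second consumer “Checkout” are invented for this example. The sketch also assumes the broker keeps a list of verification results per consumer and provider version, and treats a consumer as ready as soon as one passing result exists for the new version. A real broker would more likely look at the latest result only.

```typescript
interface ContractResult {
  consumer: string;
  providerVersion: string;
  passed: boolean;
}

// Consumers that have no passing contract for the given provider version yet.
function consumersNotReadyFor(results: ContractResult[], version: string): string[] {
  const consumers = Array.from(new Set(results.map((r) => r.consumer)));
  return consumers.filter(
    (consumer) =>
      !results.some((r) => r.consumer === consumer && r.providerVersion === version && r.passed)
  );
}

// The feature toggle for the campaign follows directly from that list.
function campaignEnabled(results: ContractResult[], version: string): boolean {
  return consumersNotReadyFor(results, version).length === 0;
}

const brokerResults: ContractResult[] = [
  { consumer: "Suggestors", providerVersion: "1.0.0", passed: true },
  { consumer: "Suggestors", providerVersion: "1.0.1", passed: false },
  { consumer: "Checkout", providerVersion: "1.0.0", passed: true },
  { consumer: "Checkout", providerVersion: "1.0.1", passed: true },
];

const blockedBy = consumersNotReadyFor(brokerResults, "1.0.1");
const toggleBefore = campaignEnabled(brokerResults, "1.0.1");

// "Suggestors" update their contract and it passes against 1.0.1.
const afterUpdate = brokerResults.concat([
  { consumer: "Suggestors", providerVersion: "1.0.1", passed: true },
]);
const toggleAfter = campaignEnabled(afterUpdate, "1.0.1");
```

Before the update, the list names the one team “ProductEverywhere” has to talk to. After it, the list is empty and the toggle can be switched on.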

Show me some code

To get a better feeling for whether contracts for components would work at all, I created a demo repository you can find here: github.com/MatthiasKainer/contracts4components

With this project comes the web library that allows you to run tests against web components and React components, and a server that acts as a contract broker.

You can find examples for the components in the folders:

Web Component Examples

- Consumer: contracts4components.web/test/dom/consumer/test.ts

- Provider: contracts4components.web/test/dom/consumer/test.ts

React Examples

- Consumer: contracts4components.web/test/react/consumer/test.ts

- Provider: contracts4components.web/test/react/provider/test.ts

You can find the server in the folder contracts4components.server. It is available as a Docker container and will be used in the tests automatically.